Adding a Source and Destination Connection

In order to extract your data, you need to add a source and a destination connection.

- Adding a source connection specifies the data source on which your data will be retrieved through the extraction process. Once the data is taken from the source connection, it needs a location to be stored, hence the destination connection.

- Adding a destination connection specifies the place in which your data will be integrated in order to be read once transformed.

To add a source or a destination connection:

-

Click the Connections icon on the left-hand side.

-

Click New next to Source Connections or Destination Connections.

-

Select the type of data source you want to use.

Source connections

Destination connections

Active Directory

Acumatica

Custom API

Flat File

Google Analytics

Google Sheets

GraphQL

HubSpot

LDAP

MongoDB

Microsoft Access

Microsoft Excel

MySQL

NetSuite

OData

ODBC

Oracle

PostgreSQL

Quickbooks Desktop

Quickbooks Online

Sage Intacct

Sage 50 UK

Salesforce

SAP HANA

SQL Azure

SQL RDS

SQL Server

Flat File

Oracle

Snowflake

SQL Azure

SQL RDS

SQL Server

Web API source connections

Web API source connections

Aweber

Accelo

AdRoll

AfterShip

AgileCRM

Aha!

Aircall

Amplitude

Autopilot

Avaza

BambooHR

BenchmarkEmail

Billsby

Bugsnag

Calendly

CampaignMonitor

Campaigner

CapsuleCRM

ChargeOver

Chargebee

ClickTime

ClickUp

Clio

Close CRM

Codat

Coinbase

ConstantContact

Contactually

ConvertKit

Copper CRM

Delighted

Dotmailer

Drift

Drip

Egnyte

Elorus

EmailOctopus

Eventbrite

Everhour

Figshare

Float

Flowlu

FreeAgent

Freshsales

Freshservice

Freshteam

Front

GetResponse

Gorgias

Grafana

Harvest

HelloSign

Helpscout

Hive

Hubstaff

Humanity

iContact

Infusionsoft

Insightly

Intercom

Invoiced

JotForm

Jumpseller

Klaviyo

Klipfolio

LaunchDarkly

LeadDesk

LightSpeedRetail

LionDesk

MailerLite

Mailjet

MessageBird

Mode

Moosend

Mouseflow

Nethunt

Omnisend

OnePageCrm

Ontraport

Opsgenie

Optimizely

PabblySubscriptions

PagerDuty

Paymo

Pingdom

Pipeline CRM

Pipeliner CRM

PivotalTracker

Planio

PolarTeamPro

Printful

Printify

Procore

Quaderno

Rebilly

Recurly

Retently

SageHR

Samanage

SendInBlue

SendPulse

Sendwithus

ShipEngine

Shippo

SpaceX

SparkPost

Stack Exchange

Tanda

Teamgate

Teamwork

Testmonitor

Tickspot

Timely

Todoist

Toggl

Typeform

Unbounce

VWO

Wistia

Wrike

Yelp

ZarMoney

ZendeskSell

Zenefits

ZohoDesk

ZohoInventory

ZohoInvoice

ZohoMarketingHub

ZohoPeople

ZohoProjects

ZohoSubscriptions

Zoom

Your Custom API

Note: Versions prior to SQL Server 2008 R2 are not supported.

-

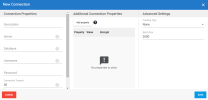

The New Connection dialog box is displayed. Enter the parameters in the following panels: Connection Properties, Additional Connection Properties and Advanced Settings as described below.

- Click Save to finish.

| Setting | Description |

|---|---|

|

Connection Properties |

According to the connection selected, the fields will be different from one connection to another due to their characteristics. For more information about each source connection type, see Source Connector Settings. Tool tips located next to each field are available to help you setting up some fields. Feel free to take a look to get more details if needed. |

|

Additional Connection Properties This panel can be used to add specific properties to the connection string. |

|

| Add Property |

Click this button if you want to specify some properties for this connection. For each property you add, you can define if you want it to be encrypted or not (i.e. visible). If you choose Encrypted, the value of the property (ex: password) will not be shown on the screen and will be encrypted in the back end. |

| Property | Enter a connection string which defines the action you want to perform (ex: READ). |

| Value |

According to the property you entered, you can set the state (for example : TRUE or FALSE). |

| Encrypt |

Select this option if you want to encrypt data related to the property. |

| Delete |

Click on the X sign to delete the property. |

|

This panel is available for some source connections. |

|

| Tracking Type |

Date: the synchronization between the source and the destination will be incremental as it will be based on the latest update values of the DateTime entry of each row. Note: Sage X3 for instance used the field UPDDATTIM_0 as DateTime while in Sage 300 the DateTime is divided into 2 separate fields (AUDTDATE and AUDTTIME). For the case of Sage 300, you will have to create a calculated field first to merge these fields then to convert them into a "real" DateTime field in order to work properly. |

|

Time Zone (if you select Date as tracking type |

Defines the region and time zone that will be used by the source connection. |

|

Time Offset (if you select Date as tracking type) |

Adds an offset to the source connection that will be used for Incremental Load job (Minimal value is 0 and maximum 3600 seconds). |

|

Batch Size |

The batch size represents the quantity of data read, transform and load in the destination at the same time. To set the value, remember that the bigger the batch size is the bigger the memory usage will be and the faster it will be up to an extend. The default value is 2000 and the maximum value should not be set to more than 10 000. Based on your network speed and disk performance, you can experiment which size of block would be faster for you. Usually the default value (2000 records) works fine. |

If DataSync is not installed on the same server as your ERP application, please check that the minutes and seconds in the two servers match. Use the Time Offset feature (in seconds) if there is a difference. You can enter a number greater than the difference to ensure optimal data retrieval during an Incremental Load task.

A client imports an Excel file that has a lot of transactions (1000 rows).

While processing the transactions with a job, a small time gap exists during the time period between when the job has finished submitting the rows and when the system has stamped the last success date (DateTime.Now (meaning the current time)).

During that time period, imagine that a few rows have been added (for example, 5 rows, so there are now 1005 rows) and the client does a refresh (Incremental Load). Since the refresh is based on the last success date, it will skip the 5 new rows that came after the job has finished but before the timestamp was done by the system.

Adding an offset to the connection can fix that problem because it removes the time gap.